Superficial Safety Alignment Hypothesis

Jianwei Li & Jung-Eun Kim *

Department of Computer Science

North Carolina State University

Raleigh, NC, USA

{jli265, jung-eun.kim}@ncsu.edu

Overview

As large language models (LLMs) are increasingly integrated into various applications, ensuring they generate safe and aligned responses is a pressing need. Previous research on alignment has largely focused on general instruction-following but has often overlooked the unique properties and challenges of safety alignment, such as the brittleness of safety mechanisms.

To bridge the gap, we propose the Superficial Safety Alignment Hypothesis (SSAH), which posits that safety alignment should teach an otherwise unsafe model to choose the correct reasoning direction - interpreted as a specialized binary classification task - and incorporate a refusal mechanism with multiple reserved fallback options. Furthermore, through SSAH, we hypothesize that safety guardrails in LLMs can be established by just a small number of essential components.

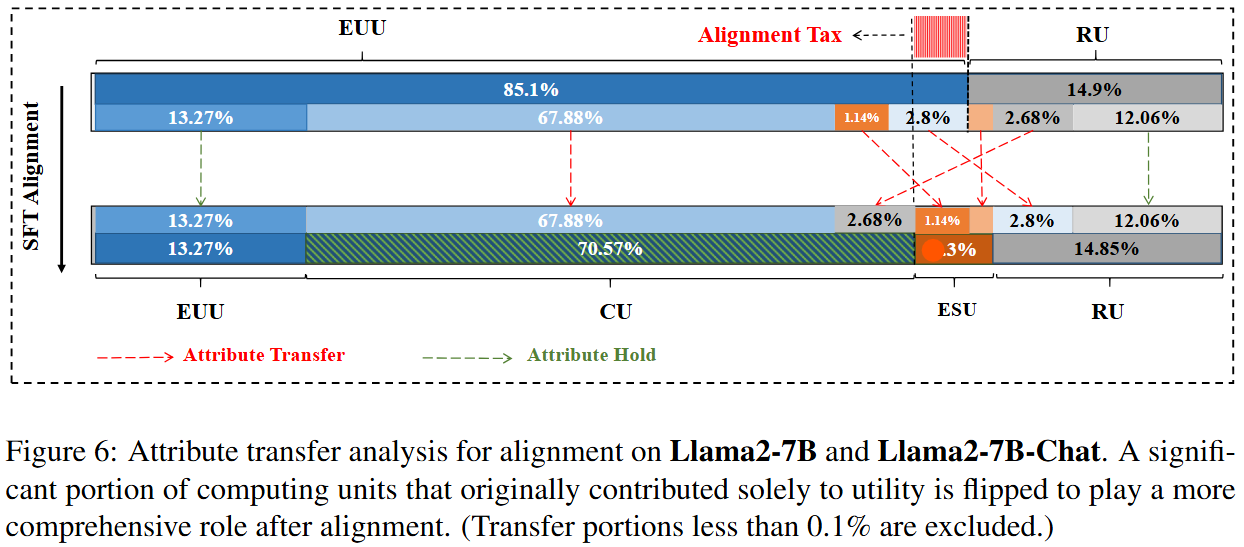

- We conduct an ablation study and identify four types of attribute-critical components in safety-aligned LLMs: Exclusive Safety Unit (ESU), Exclusive Utility Unit (EUU), Complex Unit (CU), and Redundant Unit (RU).

- Freezing certain safety-critical components (7.5%) during fine-tuning allows the model to retain its safety attributes while adapting to new tasks.

- Leveraging redundant units (20%) in the pre-trained model as an "alignment budget" can minimize the alignment tax while achieving alignment goals.

All things considered, this paper concludes that the atomic functional unit for safety in LLMs is at the neuron level and underscores that safety alignment should not be complicated at the surface level. We believe this work contributes to the foundation of efficient and scalable safety alignment for future LLMs.

Motivation

This study follows a structured three-step approach to address the following critical questions of safety alignment in LLMs. First, we propose a hypothesis aimed at advancing the theoretical understanding of safety alignment. Second, based on this framework, we investigate two fundamental challenges. Finally, leveraging the insights gained, we propose targeted mitigation strategies to address the identified issues.

Key Questions

Question 1: How does safety alignment impact model behavior?

Through SSAH, we posit that safety alignment fundamentally alters a model's decision-making process by teaching an otherwise unsafe model, one that would fulfill malicious or harmful requests, to follow the correct reasoning pathways. This process can be viewed as a specialized binary classification task: the model must either fulfill the user's request or refuse it based on safety considerations.

Question 2: Why is safety alignment brittle, and why does it introduce an alignment tax?

We propose an attribute-based approach to analyzing the alignment and fine-tuning processes, where specific attributes are assigned to each individual computational unit, primarily input channels and output neurons. Our findings indicate that the desired attributes can be achieved by repurposing units that were originally responsible for other functions. This reallocation helps explain both the brittleness of safety mechanisms and the alignment tax.

Question 3: Can these issues of safety alignment be mitigated?

By freezing the safety-critical components during fine-tuning and repurposing redundant units, we can effectively mitigate the brittleness and minimize the alignment tax. We conclude that the atomic functional unit for safety in LLMs resides at the neuron level, and we underscore that safety alignment should not be complicated.

Superficial Safety Alignment Hypothesis (SSAH)

Previous research introduced the Superficial Alignment Hypothesis (SAH), which posits that a model’s knowledge and capabilities are primarily learned during pretraining, while alignment teaches the model which subdistribution of formats to use when interacting with users. However, this hypothesis focuses on general alignment, making it challenging to isolate the effects of pretraining from those of alignment in cases where a model fails to meet user expectations.

To specifically address safety alignment, we propose the Superficial Safety Alignment Hypothesis (SSAH), which focuses on models capable of fulfilling malicious requests. The hypothesis states:

SSAH: Given an unsafe model that is capable of fulfilling users' malicious requests, safety alignment teaches the model the correct reasoning direction and a simple refusal mechanism with reserved options.

Reasoning direction refers to the model's internal decision-making process when responding to malicious queries. It represents the binary choice between fulfilling a harmful request or issuing a refusal, based on safety considerations.

Key Differences from SAH

- Knowledge and reasoning ability: SSAH assumes models already possess sufficient knowledge and reasoning abilities, allowing safety alignment to focus solely on ensuring safe behavior without addressing broader capability limitations.

- Refusal mechanisms: Safety alignment emphasizes standardized refusal formats with fallback options, such as "I cannot fulfill your request as it violates safety guidelines," making the task more straightforward compared to handling diverse human preferences in general alignment.

- Correction of reasoning direction: SSAH aims to teach the model to consistently choose the correct reasoning direction, which can be framed as a binary classification task—either fulfilling or refusing a user request based on its safety implications.

Challenges in Proving SSAH

Empirically proving SSAH remains challenging due to the infeasibility of sampling sufficient outputs to fully capture the model’s distribution of responses. Specifically, it is difficult to draw comprehensive conclusions solely from surface-level benchmark evaluations.

To address this limitation, we take an alternative approach: if SSAH holds, we should observe distinct and consistent differences in the reasoning direction at each step of generation between safety-aligned and non-safety-aligned models. In a safety-aligned model, the reasoning direction should consistently guide the model in rejecting harmful queries at every token generation step. In contrast, a non-safety-aligned model might exhibit reasoning patterns that lean toward fulfilling malicious requests. Rather than relying solely on surface-level benchmark evaluations, we can probe the model’s reasoning direction to gain deeper insights into its internal decision-making process at each step regardless of the specific outputs produced.

Probing Experiment

We designed a probing experiment to infer the model’s reasoning direction by comparing the hidden state distances in feature space across three types of queries:

- Query: A malicious query (e.g., "How to make a bomb?").

- Query + benign prompt tokens: The malicious query followed by benign tokens (e.g., "Sorry, I can’t...").

- Query + malicious prompt tokens: The malicious query followed by malicious tokens (e.g., "Here’s how...").

By comparing the distances between hidden states, we gain insights into how safety alignment reshapes the model’s decision-making process during token generation. Specifically, we expect aligned models to show shorter distances between the Query and Query + benign prompt tokens, reflecting a preference for safe reasoning.
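The comparison described above can be illustrated with a minimal sketch. It assumes that one hidden-state vector per transformer block has already been extracted for each of the three inputs, and it uses cosine distance as the metric. Both choices are assumptions made for illustration, as are the function names and the toy vectors; the metric and extraction procedure of the actual experiment are not specified here.

```python
import math
from typing import List, NamedTuple, Sequence

Vector = Sequence[float]


class LayerProbe(NamedTuple):
    layer: int
    d_benign: float     # distance: Query vs. Query + benign prompt tokens
    d_malicious: float  # distance: Query vs. Query + malicious prompt tokens
    gap: float          # d_malicious - d_benign


def cosine_distance(a: Vector, b: Vector) -> float:
    """Return 1 - cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def probe_reasoning_direction(
    query: List[Vector], benign: List[Vector], malicious: List[Vector]
) -> List[LayerProbe]:
    """Compare, block by block, how far the query state is from each continuation.

    Each argument holds one hidden-state vector per transformer block
    (hypothetical inputs; extracting them from a real model is out of scope).
    """
    report = []
    for layer, (q, b, m) in enumerate(zip(query, benign, malicious)):
        d_benign = cosine_distance(q, b)
        d_malicious = cosine_distance(q, m)
        # A positive gap means the query state sits closer to the refusal path.
        report.append(LayerProbe(layer, d_benign, d_malicious, d_malicious - d_benign))
    return report


# Toy two-block example with made-up 3-dimensional hidden states.
query_states = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
benign_states = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.0]]
malicious_states = [[0.0, 1.0, 0.0], [1.0, 0.0, 0.0]]

report = probe_reasoning_direction(query_states, benign_states, malicious_states)
prefers_refusal = all(row.gap > 0 for row in report)
print(prefers_refusal)
```

In this toy setup a positive gap at every block corresponds to the pattern expected of an aligned model; a negative gap would correspond to a state that leans toward fulfilling the request.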

Results Analysis

The experiment results confirm that safety alignment influences the model’s reasoning direction at each step of generation:

- In aligned models, the distance between Query and Query + benign prompt tokens is consistently shorter than the distance to Query + malicious prompt tokens.

- In unaligned models, the opposite pattern is observed, indicating a lack of strong preference for safe reasoning.

- Aligned models exhibit clear and consistent safe reasoning preferences across all transformer blocks, whereas unaligned models demonstrate less pronounced differences.

Discussion and Implications

The results highlight that safety alignment not only influences higher-level features in later layers but also embeds safe reasoning preferences in earlier layers of the transformer architecture. This suggests that safety alignment operates at multiple levels, fundamentally reshaping the model’s internal decision-making process to ensure safer behavior throughout the response generation process.

While these findings provide strong evidence supporting SSAH, it is important to note that they do not fully capture the nuanced changes introduced by safety alignment. Further research is needed to explore other potential effects and limitations.

Safety Alignment Hypothesis (SAH) for Jailbreak/Red-teaming Attacks

The Superficial Safety Alignment Hypothesis (SSAH) was originally proposed to explain how safety alignment impacts model behavior under direct attacks. However, our research demonstrates that SSAH can extend beyond direct attacks to provide theoretical guidance for addressing jailbreak and red-teaming scenarios.

Evolving from SSAH to SAH

Building on the foundations of SSAH, we propose the Safety Alignment Hypothesis (SAH), which refines and extends SSAH to encompass all stages of model generation. SAH represents a comprehensive framework for understanding how alignment techniques should influence model behavior to ensure safety across all interaction steps.

Safety Alignment Hypothesis (SAH): Given an unsafe model capable of fulfilling users' malicious requests, safety alignment should teach the model to choose and maintain the correct reasoning direction at each generation step, along with simple refusal mechanisms. This allows the model to continuously re-evaluate and re-choose the reasoning direction throughout the interaction.

Theoretical Contributions

- SAH provides a theoretical framework for improving safety alignment by equipping models with mechanisms to maintain the correct reasoning direction across all generated tokens.

- This hypothesis offers a conceptual pathway to mitigate jailbreak attacks by ensuring safety mechanisms persist even under adversarial attempts.

- SAH bridges the gap between existing alignment techniques and their limitations, offering a roadmap for future advancements in robust and scalable safety alignment.

Less is More for Safety Alignment

Based on the Superficial Safety Alignment Hypothesis (SSAH), we posit that safety alignment only needs to teach the model the correct reasoning direction - either fulfilling or refusing a request - and to equip it with a standard refusal mechanism. This leads to the insight that safety alignment can be achieved using only a small subset of critical computing units, as the task can be interpreted as a binary classification combined with a multi-selection task.

Identifying Safety-Critical Computing Units

To validate this hypothesis, we categorized the computing units of large language models (LLMs) into four groups:

- Exclusive Safety Units (ESU): Linked exclusively (relatively) to the safety attribute.

- Exclusive Utility Units (EUU): Linked exclusively (relatively) to the utility attribute.

- Complex Units (CU): Contribute to both safety and utility attributes.

- Redundant Units (RU): Not associated with any attribute.

To verify that different groups of computing units contribute exclusively, jointly, or not at all to the safety and utility attributes, we use a model pruning mechanism. The rationale behind pruning is that removing the components most closely linked to a specific attribute should significantly degrade the model's performance on that attribute; in effect, it is an ablation study. As pruning reduces the model's capacity, the most affected attributes reveal the critical components for that function.

Our experiments reveal that only 1.3–1.4% of the model's units are exclusively responsible for safety attributes, confirming that safety alignment relies on a minimal subset of safety-critical components. Complex units play a supportive role by contributing to both safety and utility tasks, while redundant units have no significant impact.
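The four-way grouping can be sketched as a simple rule over two per-unit scores. The sketch below assumes each unit already has a safety score and a utility score, standing for how much the corresponding attribute degrades when that unit is pruned, and that a single threshold separates "matters" from "does not matter". The scores, the threshold, and the function name are all hypothetical; the actual scoring procedure is not described here.

```python
from typing import Dict, List


def categorize_units(
    safety_score: List[float],
    utility_score: List[float],
    threshold: float = 0.5,
) -> Dict[str, List[int]]:
    """Assign each unit index to ESU, EUU, CU, or RU.

    Scores and threshold are illustrative assumptions: a higher score means
    pruning the unit hurts that attribute more.
    """
    groups: Dict[str, List[int]] = {"ESU": [], "EUU": [], "CU": [], "RU": []}
    for idx, (s, u) in enumerate(zip(safety_score, utility_score)):
        matters_for_safety = s >= threshold
        matters_for_utility = u >= threshold
        if matters_for_safety and matters_for_utility:
            groups["CU"].append(idx)   # contributes to both attributes
        elif matters_for_safety:
            groups["ESU"].append(idx)  # (relatively) exclusive to safety
        elif matters_for_utility:
            groups["EUU"].append(idx)  # (relatively) exclusive to utility
        else:
            groups["RU"].append(idx)   # no measurable link to either attribute
    return groups


# Made-up scores for five units.
safety = [0.9, 0.1, 0.8, 0.05, 0.2]
utility = [0.1, 0.7, 0.9, 0.02, 0.6]
groups = categorize_units(safety, utility)
print(groups)
```

A hard threshold is the simplest possible rule; a rank-based cut (for example, the top fraction of units per attribute) would fit the same interface.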

Why is Safety Brittle?

Fine-tuning safety-aligned models for new tasks often compromises their safety performance. During fine-tuning, safety-critical units and complex units tend to be repurposed for utility tasks, weakening the model’s safety guardrails. This phenomenon highlights the inherent brittleness of current safety alignment methods.

Freezing Safety-Critical Components

To address this brittleness, we propose freezing safety-critical components, including ESUs and top-performing complex units, during fine-tuning. Experimental results demonstrate that this approach preserves much of the model's safety performance, limiting the degradation of its safety guardrails.
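As a rough illustration of what freezing means at the level of individual units, the sketch below applies one plain gradient-descent step to a weight matrix while leaving selected rows untouched. Treating each row as one output neuron, using vanilla SGD, and the names `sgd_step_with_frozen_units` and `frozen_rows` are assumptions for illustration only, not the training setup used in the experiments.

```python
from typing import List, Set


def sgd_step_with_frozen_units(
    weights: List[List[float]],
    grads: List[List[float]],
    frozen_rows: Set[int],
    lr: float = 0.1,
) -> List[List[float]]:
    """Apply one SGD step, skipping rows (output neurons) marked as frozen."""
    updated = []
    for row_idx, (w_row, g_row) in enumerate(zip(weights, grads)):
        if row_idx in frozen_rows:
            # Safety-critical unit: copy the weights through unchanged.
            updated.append(list(w_row))
        else:
            updated.append([w - lr * g for w, g in zip(w_row, g_row)])
    return updated


# Three output neurons; neuron 1 is treated as safety-critical (hypothetical).
weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
grads = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
new_weights = sgd_step_with_frozen_units(weights, grads, frozen_rows={1})
print(new_weights)
```

Only the unfrozen rows move after the step, so whatever function the frozen neuron implemented before fine-tuning is carried over unchanged.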

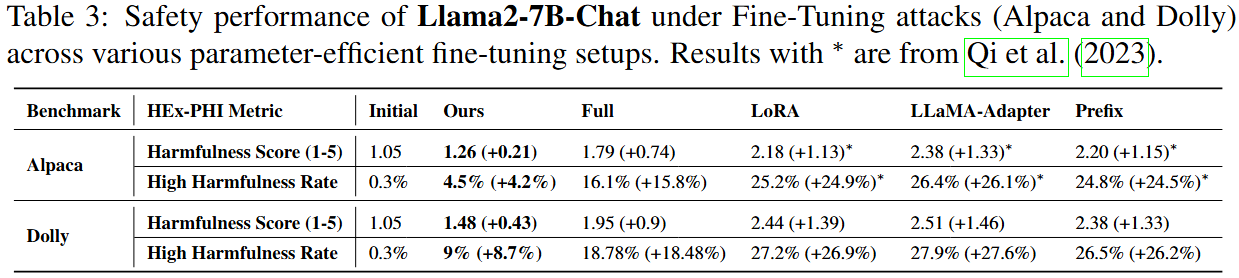

Comparison with Parameter-Efficient Fine-Tuning (PEFT)

Our approach outperforms parameter-efficient fine-tuning methods such as LoRA, LLaMA-Adapter, and Prefix Tuning, which degrade safety performance more severely. This confirms that the preservation of safety is not merely due to freezing parameters but results from accurately identifying and protecting safety-critical components.

Free Lunch: Repurposing Redundant Units as Alignment Budget

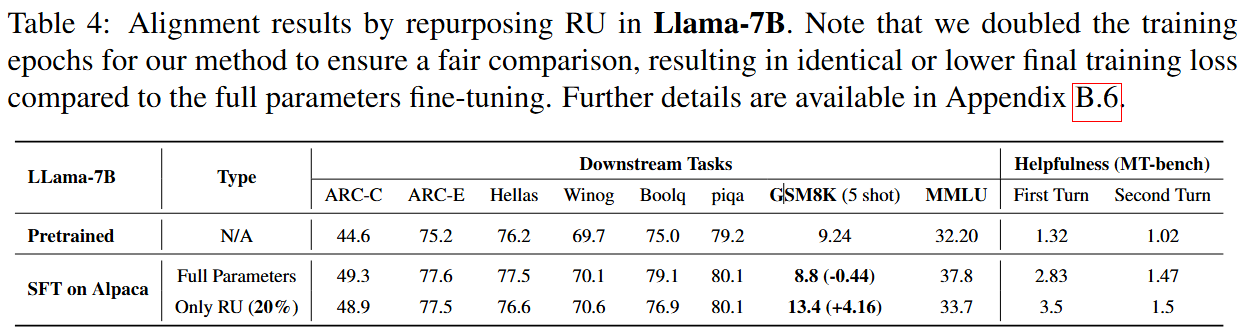

We extend our insights to explore whether redundant units (RUs)—which account for at least 20% of parameters in pre-trained LLMs—can be repurposed as a budget for safety alignment. Our hypothesis is that fine-tuning these redundant units can enhance safety alignment while reducing alignment tax.

Experimental Results

Using the pruning method, we identified redundant units in LLaMA-7B and fine-tuned only these units for alignment. The results demonstrate that alignment can be achieved with updates to just 20% of the model's parameters, effectively eliminating alignment tax. This finding highlights the scalability and efficiency of our approach, making it a promising direction for future LLM safety alignment.
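One way to picture the "alignment budget" is as a selection step that marks the least important units as the only trainable ones. The sketch below assumes a single importance score per unit and picks the lowest-scoring 20%. The scores, the `budget` parameter, and the function name are hypothetical, and ranking by one score is a simplification that is not claimed to match the pruning criterion used in the experiments.

```python
from typing import List


def select_redundant_units(importance: List[float], budget: float = 0.2) -> List[int]:
    """Return the indices of the least important units, up to the budget fraction."""
    k = int(len(importance) * budget)
    # Rank unit indices from least to most important.
    ranked = sorted(range(len(importance)), key=lambda i: importance[i])
    return sorted(ranked[:k])


# Made-up importance scores for ten units.
importance = [0.9, 0.05, 0.7, 0.6, 0.01, 0.8, 0.4, 0.3, 0.5, 0.95]
trainable = select_redundant_units(importance)
frozen = [i for i in range(len(importance)) if i not in trainable]
print(trainable, frozen)
```

During alignment, only the units in `trainable` would receive updates, while all other units stay fixed so that existing utility is left undisturbed.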

Discussion, Limitation, and Conclusion

Discussion

While our Safety Alignment Hypothesis (SAH) provides valuable insights into adversarial scenarios, such as jailbreak attacks, this work does not propose a specific solution to address these issues. If these challenges could be resolved within the framework of our theory, the term "Superficial" in SSAH may no longer be necessary.

Recent research [Qi et al., 2024] offers supporting evidence for this perspective. However, advanced adversarial attacks may not be fully mitigated by relying solely on the model’s internal mechanisms. A systematic, multi-layered approach extending beyond the model itself may be required to effectively defend against sophisticated threats.

Limitation

In reallocating redundant units for safety purposes, we evaluated only one alignment method, Supervised Fine-Tuning (SFT). Due to resource constraints, this study did not explore other alignment methods, such as Proximal Policy Optimization (PPO) or Direct Preference Optimization (DPO). Future work could extend the evaluation to these methods to validate the generalizability of our approach.

Conclusion

This paper distinguishes safety alignment from general alignment in large language models (LLMs) and addresses three critical questions:

- How does safety alignment affect model behavior?

- Why are safety mechanisms brittle?

- How can the safety alignment tax be mitigated?

By systematically answering these questions, we demonstrate that safety alignment can be a straightforward and efficient process, providing a foundation for more robust and scalable safety mechanisms in future LLMs.